Cady Tianyu Xu

Google DeepMind · LLM Agents · Multimodal AI

Hello! 👋 I’m Cady, a researcher at Google DeepMind. 🌀 My work focuses on multimodal LLM agents that move beyond open-ended generation toward closed-loop execution, bridging the gap between high-level reasoning and autonomous task completion in real-world environments.

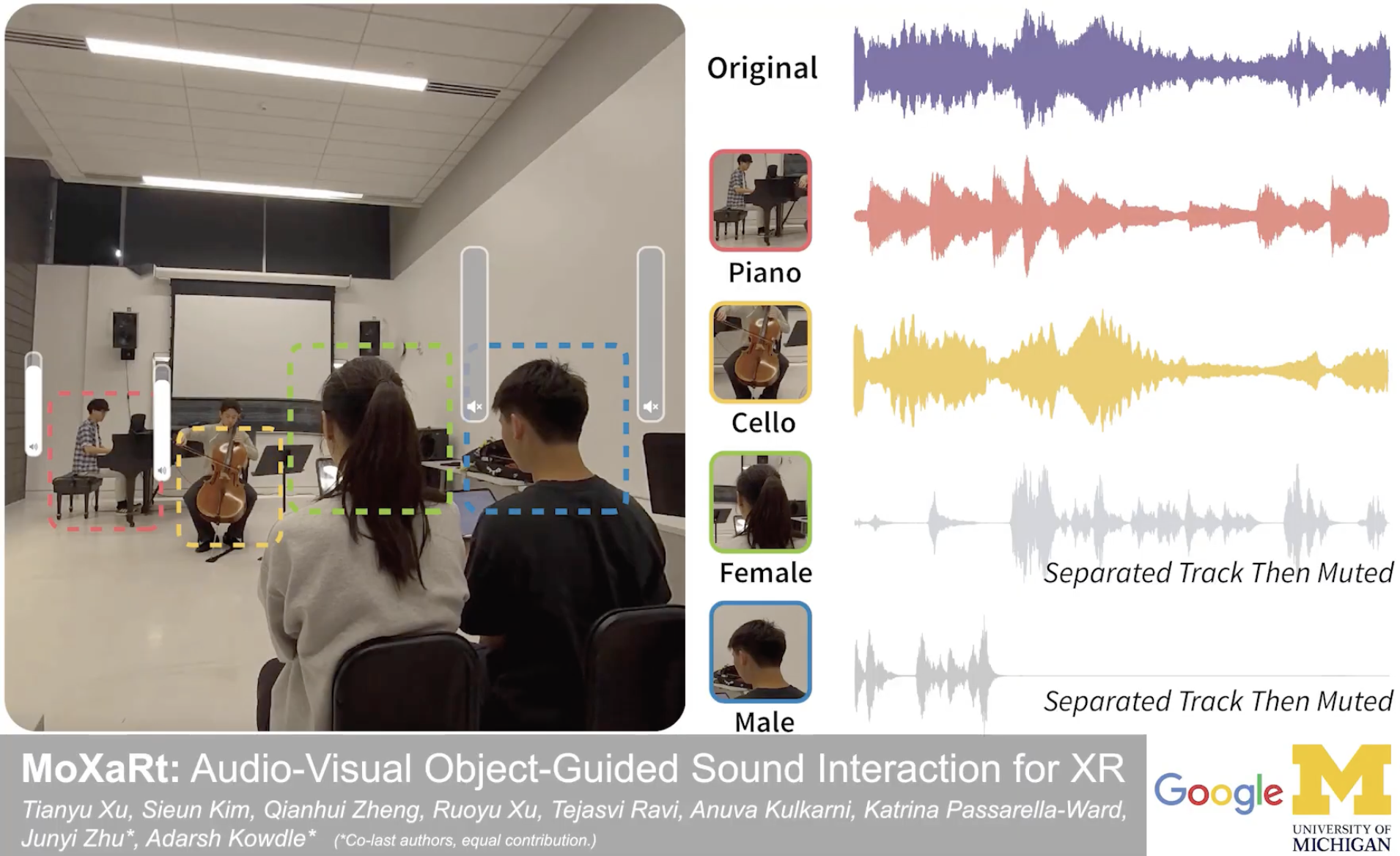

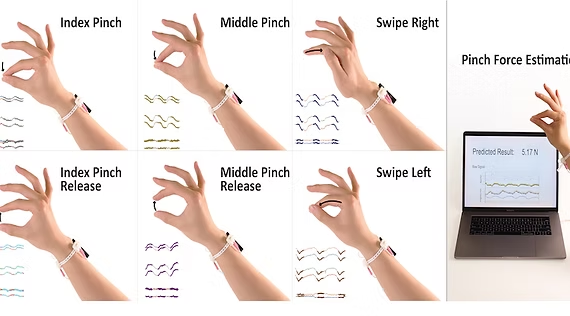

Previously, I was a machine learning engineer on the Google XR team, where I developed perception models and multimodal LLMs for immersive, context-aware interaction. 🕶️ My recent work also spans multimodal human-centered systems and interactive AI, with publications at UIST and CHI.

Before joining Google, I was a software engineer at Apple. I received my Bachelor’s degree in both Computer Science and Political Science from UC Berkeley. 🐻

I’m always excited to connect with researchers and practitioners working on LLMs, XR, or autonomous agents. For potential collaborations, reach out via LinkedIn or email, or check out my Google Scholar profile! 🐑

news

| Apr 08, 2026 | Presenting MoXaRt at CHI 2026 in Barcelona! 🇪🇸 Come find us at the Barcelona International Convention Centre, P1 — Room 128 on Fri, Apr 17 at 9:00 AM. 🎤 |

|---|---|

| Mar 21, 2026 | Thrilled to be a speaker at the 2026 Silicon Valley Women in Engineering Conference! I’ll be presenting “Sound, Space and Agency: Building Context-Aware Wearable Systems” in the Emerging Technologies C2 · UX & Wearable Technology session, Sat 3/21 at 1:45–2:45 PM. 🎙️ |

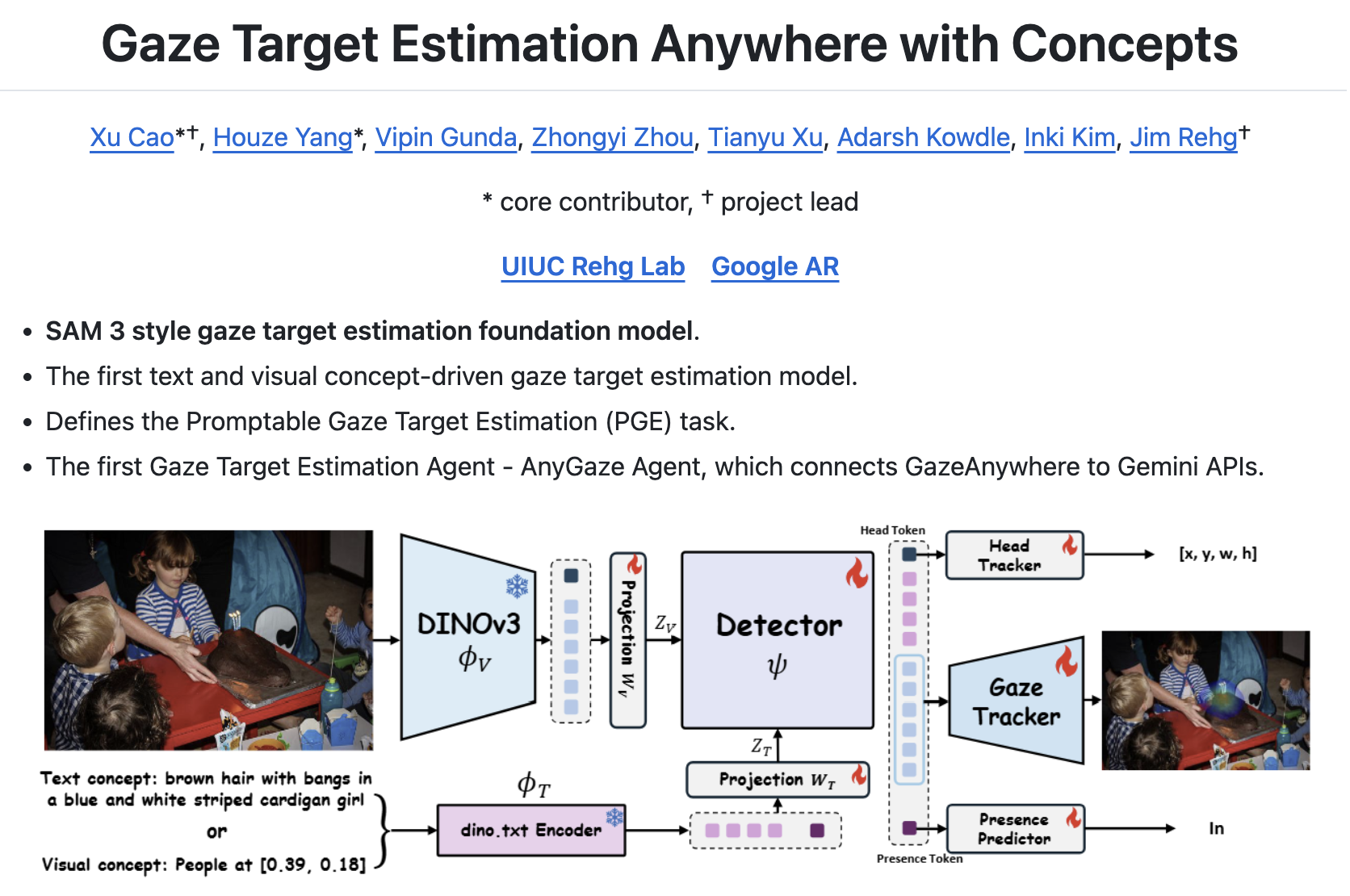

| Mar 08, 2026 | Our paper GazeAnywhere has been accepted to CVPR 2026! 🎉 The first foundation model for promptable gaze target estimation. 👀 |

selected publications

- GazeAnywhere

In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR 2026)

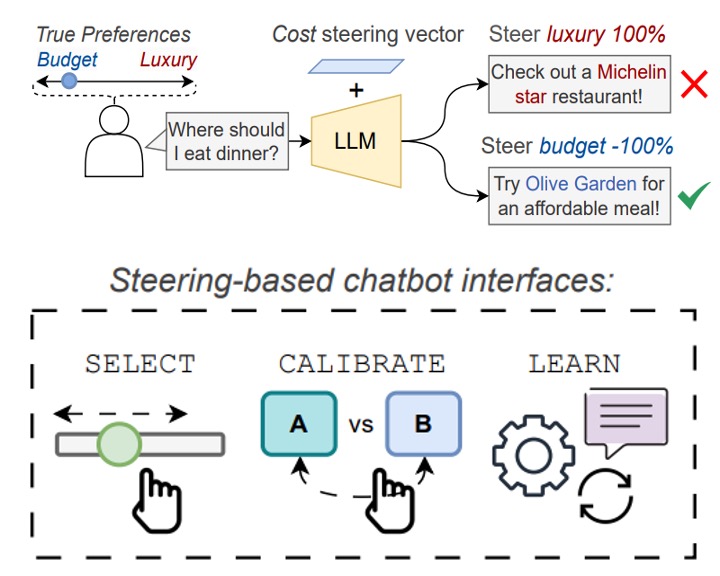

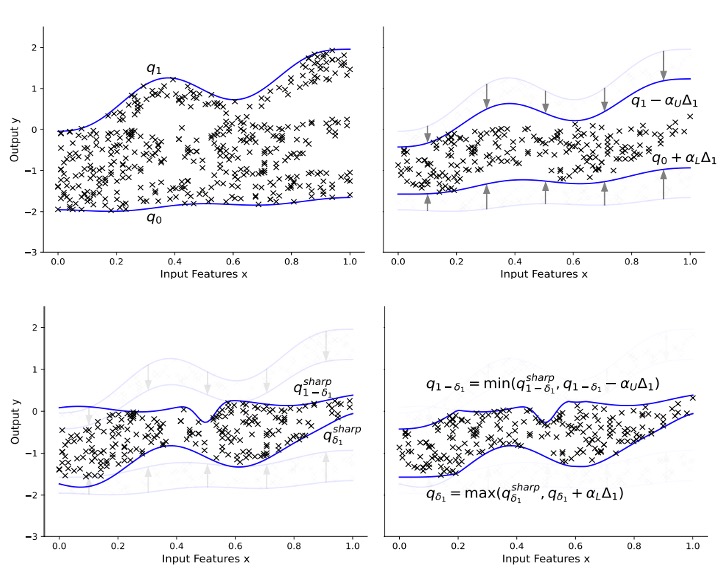

- CaliPSO

In ICML 2025 Workshop on Methods and Opportunities at Small Scale
